Acoustic Camera

The idea is to build a camera capable of capturing images using sound as the illumination source rather than light. There are a number of ways to create images from sound, the most common of which usually involve phased arrays of some sort. This is how medical ultrasound and certain types of radar are often done, and the approach has many advantages. Another method is more similar to a light camera, except that the sound waves are focused like light waves by a simple type of lens, such as a pinhole or a zone plate. This type of camera would be extremely large, possibly filling a small room for even relatively high audible frequencies. It would require far more circuitry and have some other disadvantages, but it has a certain old-school retro cool factor about it.

Current Status

January 4, 2013

A couple of weeks ago Brendan let me use some of his audio gear to capture some preliminary data from a real mic array, rather than simulating it. At the time we were only able to set up 4 mics, and the gain turned out to be far too low on one of them, so that channel is extremely coarse after normalization. Still, the exercise was very successful and useful. I positioned the mics by eye using a tape measure, aiming for a spacing of about 6", which is close to the half-wavelength of 1kHz sound in air at sea level. By capturing a loud sound off the end of the array ("end-fire", in array parlance) and comparing the delays between the signals, it was easy to determine the actual spacing to good resolution (a millimeter or less).

I wish the images I'm producing were crisper, but I am able to produce animations of the locations of hand claps. Comparing the results from the actual array against the same hand claps placed in simulated locations, the simulated data is better, but this can probably be attributed largely to factors we can improve in the future, such as matching the mics, capturing data in a more far-field setup, etc. Other factors such as background noise and multipath may prove more troublesome. Adding more mics should improve the directionality, and this is evident in simulations. Finding a moving 1kHz tone (generated by my cell phone) proved somewhat iffy, possibly due to the ambiguity of matching a pure tone, which repeats every cycle.

One thing I'm having some difficulty with is choosing the appropriate bandwidth: higher bandwidth should result in better matches, but frequencies whose half-wavelength is greater than the mic spacing result in defocusing, and frequencies whose half-wavelength is smaller than the mic spacing result in grating lobes, so it seems like a catch-22.
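The spacing measurement from an end-fire sound can be sketched roughly as follows. This is an illustration in Python, not the project's code: the speed of sound (343 m/s), the 44.1kHz sample rate, and the `burst` test signal are all assumptions, and `best_lag` is a plain brute-force cross-correlation.

```python
SPEED_OF_SOUND = 343.0  # m/s; assumed value for air at about 20 degrees C

def half_wavelength(freq_hz):
    """Half the wavelength in meters for a given frequency."""
    return SPEED_OF_SOUND / freq_hz / 2.0

def best_lag(a, b, max_lag):
    """Lag in samples (b relative to a) that maximizes the cross-correlation."""
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, x in enumerate(a):
            j = i + lag
            if 0 <= j < len(b):
                score += x * b[j]
        if score > best_score:
            best, best_score = lag, score
    return best

def burst(length, start):
    """Hypothetical test signal: a short click starting at sample `start`."""
    signal = [0.0] * length
    signal[start:start + 4] = [1.0, -0.8, 0.5, -0.2]
    return signal

SAMPLE_RATE = 44100  # Hz, assumed

# Simulated end-fire clap: the second mic hears it 20 samples later.
mic_a = burst(256, 100)
mic_b = burst(256, 120)
lag = best_lag(mic_a, mic_b, max_lag=40)
spacing_m = abs(lag) / SAMPLE_RATE * SPEED_OF_SOUND
print(lag, "samples,", round(spacing_m * 1000), "mm")
```

With these assumptions, `half_wavelength(1000)` comes out at about 171mm (6.75"). One whole sample at 44.1kHz corresponds to roughly 7.8mm of travel, so reaching millimeter resolution would need a higher sample rate or interpolation around the correlation peak.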

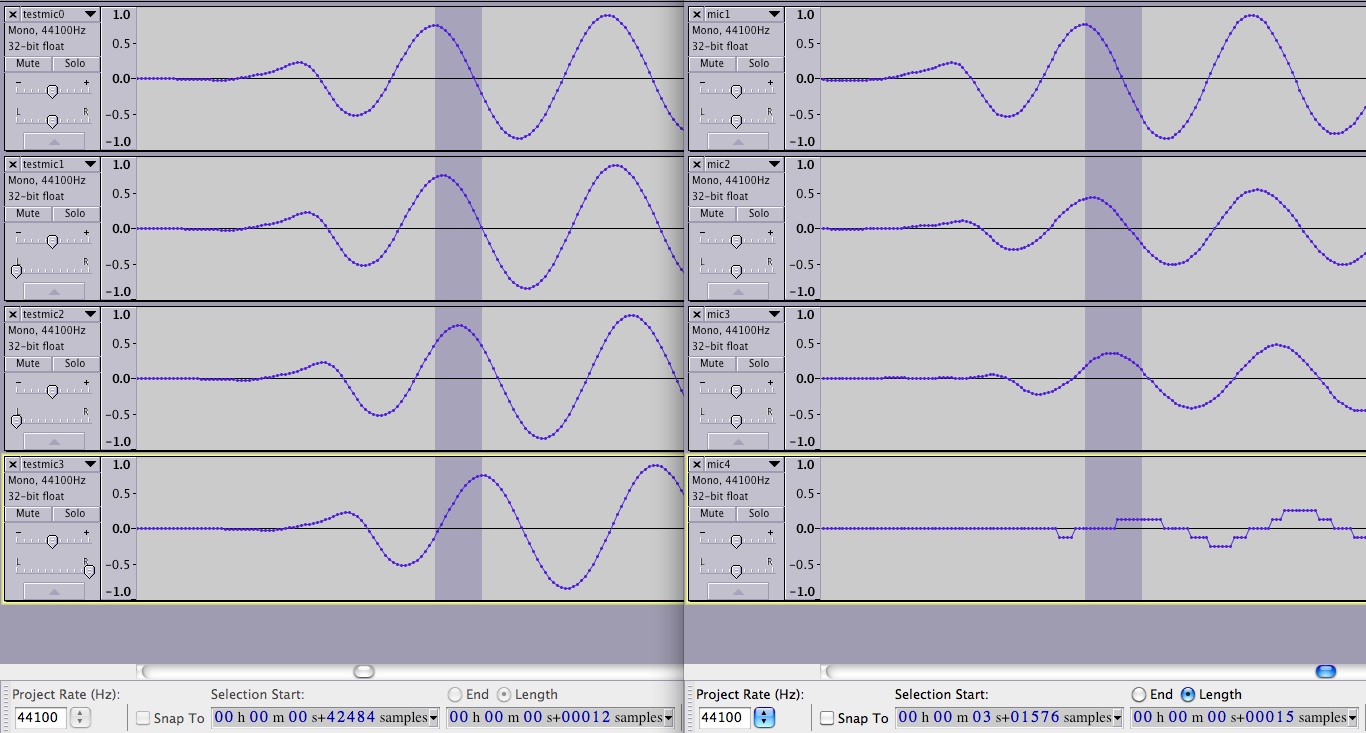

The picture shows 4 waveforms from my simulation (left side) and 4 waveforms of actual recorded array data (right side). In the "real" data you will notice the near-field effects, which show up as a mismatch in the amplitudes between the mics. You will also see channel 4, which had its gain set far too low. Currently my code doesn't model sound attenuation with distance, so it will work better with far-field sounds.

Following are 4 animations all frozen on a frame showing the beginning of a hand clap, located just slightly left of center relative to the array's forward direction. I simulated it for 2, 4, 8, and 16 mics to demonstrate just how much more directional it gets as you increase the number of mics. Four mics is a very small array, but it is enough to prove out our code. Unfortunately, the current results of the real 4-mic array more closely resemble the 2-mic simulated data, but we hope to improve on this with a better process.

I've currently got 3 ways to evaluate the intensity of a pixel of an animation for the acoustic camera:

- Sum of absolute values: simply add up the absolute values of all the samples in the time window of interest

- Sum of squares: add up the squares of all the samples of interest. This improves the sharpness somewhat

- Max matched filter response: takes a template for a sound that you're looking for and tells you how closely it matches. This requires that you have some idea of what you are searching for, so it is probably most useful in situations like active sonar, where a known chirp is emitted. In theory a known chirp is all I need to do active sonar right now. I look forward to trying it out some time.
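The three measures above can be sketched as follows. This is an illustrative Python version with made-up function names, not the project's code, and the matched filter here is a plain unnormalized sliding correlation, which is only one way to define "how closely it matches".

```python
def sum_abs(window):
    """Sum of absolute values of the samples in the time window."""
    return sum(abs(x) for x in window)

def sum_squares(window):
    """Sum of squared samples (signal energy) in the time window."""
    return sum(x * x for x in window)

def max_matched_filter(window, template):
    """Slide the template across the window; return the largest |correlation|."""
    best = 0.0
    for start in range(len(window) - len(template) + 1):
        score = sum(window[start + k] * template[k] for k in range(len(template)))
        best = max(best, abs(score))
    return best

# Hypothetical beamformed samples for one pixel and a known template.
window = [0.0, 1.0, -2.0, 1.0, 0.0]
template = [1.0, -2.0, 1.0]
print(sum_abs(window), sum_squares(window), max_matched_filter(window, template))
```

Squaring weights large samples more heavily than small ones, which is one plausible reason the sum of squares looks sharper than the sum of absolute values.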

December 15th, 2012

I've gotten a start on the software to prove the concept. Currently it can do the following:

- Take in pre-recorded mono sounds, source locations, and a description of a mic array, and produce simulated audio data for all mic channels

- Read in audio data, performing simple delay-and-sum beamforming of the sound waves to produce an output pixel

- Export a series of images or GIF animation of the audio sources as seen through the "sound camera", with brightness mapping to sound intensity
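The simulate-then-beamform steps listed above can be sketched like this. It is a simplified Python illustration, not the actual Java program: it assumes a far-field plane wave, whole-sample delays, 2-D mic positions, and a hypothetical 34.3kHz sample rate chosen so that 0.1m of travel is exactly 10 samples.

```python
SPEED_OF_SOUND = 343.0  # m/s, assumed
SAMPLE_RATE = 34300     # Hz, hypothetical; makes 0.1 m equal to 10 samples

def arrival_delays(mic_positions, direction):
    """Delay in samples at each mic for a plane wave arriving from `direction`
    (a unit vector pointing from the array towards the source)."""
    proj = [sum(p * d for p, d in zip(pos, direction)) for pos in mic_positions]
    nearest = max(proj)  # the mic furthest along `direction` hears it first
    return [round((nearest - pr) / SPEED_OF_SOUND * SAMPLE_RATE) for pr in proj]

def simulate_channels(source, delays, length):
    """Copy the mono source into each channel, shifted by that mic's delay."""
    channels = []
    for d in delays:
        ch = [0.0] * length
        for i, x in enumerate(source):
            if i + d < length:
                ch[i + d] = x
        channels.append(ch)
    return channels

def delay_and_sum(channels, delays):
    """Advance each channel by its delay so the target direction lines up, then sum."""
    n = min(len(ch) - d for ch, d in zip(channels, delays))
    return [sum(ch[i + d] for ch, d in zip(channels, delays)) for i in range(n)]

def pixel_intensity(channels, mic_positions, direction):
    """Brightness of one pixel: energy of the beam steered in `direction`."""
    beam = delay_and_sum(channels, arrival_delays(mic_positions, direction))
    return sum(x * x for x in beam)

mics = [(0.0, 0.0), (0.1, 0.0), (0.2, 0.0), (0.3, 0.0)]  # 4-mic line array
source = [1.0, -1.0, 0.5]                                # short test click
channels = simulate_channels(source, arrival_delays(mics, (1.0, 0.0)), 64)

on_target = pixel_intensity(channels, mics, (1.0, 0.0))   # steered at the source
off_target = pixel_intensity(channels, mics, (0.0, 1.0))  # steered 90 degrees away
print(on_target, off_target)
```

Steered at the source, the four copies add coherently. Steered away, they stay spread out in time and the summed energy is lower. An image would repeat `pixel_intensity` for one direction per pixel.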

The code is extremely slow, partly due to lack of optimization, partly due to being written in Java, partly because it doesn't yet take advantage of multiple processor cores or GPUs, and partly because it's just a lot of processing! But it is looking like a great start.

Hopefully soon I can add color as a way of showing different frequencies. It is likely that the frequency range will be fairly limited though.

Below is an example GIF animation created from a half-second sound. The simulated sound sources are two different recordings of my voice, placed 10 meters away from the camera, one towards the top left ("testing") and the other towards the bottom right ("experiment"). The array itself has a full 360-degree field of view, but for the sound camera I chose 80 degrees horizontally and vertically. The simulated array in this animation consists of 8x8=64 mic elements, which is quite large and processing-intensive. We may try to see how far we can cut that back in order to get it semi-realtime, or we may just not worry about it taking a long time to process.

I'm no expert, but I believe we primarily see two types of artifacts in this animation: blurriness of the sound source, and apparently bright areas where there is no sound source. I believe the first is due primarily to processing sounds whose frequency is lower than the array was designed for. The second, which I believe are called grating lobes, is due to the opposite problem: processing sounds too high in frequency for the design of the array. To combat the first problem we can make the array larger, and for the second problem we can increase the density of the array by decreasing the array spacing. Appropriate bandpass filtering should go a long way towards helping both problems. Of course the ideal phased array would have an infinite number of mic elements, and this would make it functionally identical to a parabolic reflector with the advantage that it could be steered rapidly in software. Available processing power limits the number of mics that are practical, though.
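Both artifacts show up in the textbook response of a uniform line array to a single-frequency plane wave. The sketch below is a Python illustration of that idealized model (narrowband, far-field, identical mics), not something taken from the project code. Angles are measured from the array's forward (broadside) direction, and the 8-mic array and spacings are arbitrary examples.

```python
import cmath
import math

def array_response(num_mics, spacing, wavelength, steer_deg, look_deg):
    """Normalized delay-and-sum response (0..1) of a uniform line array
    steered to steer_deg, for a plane wave arriving from look_deg."""
    k = 2.0 * math.pi / wavelength
    phase = k * spacing * (math.sin(math.radians(look_deg))
                           - math.sin(math.radians(steer_deg)))
    total = sum(cmath.exp(1j * n * phase) for n in range(num_mics))
    return abs(total) / num_mics

# Spacing equal to half a wavelength: a source 30 degrees off is fully rejected.
matched = array_response(8, 0.5, 1.0, steer_deg=0, look_deg=30)

# Frequency too low (spacing is 1/8 wavelength): the same source leaks in (blur).
too_low = array_response(8, 0.125, 1.0, steer_deg=0, look_deg=30)

# Frequency too high (spacing is a full wavelength): a source 90 degrees off
# looks exactly as bright as one straight ahead (grating lobe).
too_high = array_response(8, 1.0, 1.0, steer_deg=0, look_deg=90)

print(round(matched, 3), round(too_low, 3), round(too_high, 3))
```

In this model the blur depends on the total array length measured in wavelengths, and the grating lobes depend on the spacing measured in wavelengths, which is consistent with the two remedies described above.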

Questions to answer / Decisions to make

- Acoustic pinhole / zone plate camera?

  - What frequency of audio to use?

    - 10kHz might be a good target. It's still audible, but the wavelengths are small enough that the camera won't take up a whole building!

    - Partly depends on whether we want to capture ambient sound or if we can illuminate the scene ourselves with sound sources

  - How to capture all that data?

    - Perhaps implement a bucket brigade circuit similar to a CCD

    - Perhaps give each pixel an envelope detector so you don't have to sample each microphone at audio frequencies

      - This would eliminate any possibility of doing further processing, such as using an FFT to separate the audio from each mic into different frequency components to display in different colors

    - Mechanically sweep a few microphones to build up the image over time

- What frequency of audio to use?

- Acoustic phased array?

  - What sort of array design? Grid? Spiral?

  - What type of circuitry topology?

    - Perhaps an array of boards, each board having enough circuitry to record 8 channels. Boards are all slaved to a single computer which does all the processing

Example implementation

- Frequency: 10kHz (wavelength 33mm / 1.3")

- Microphone imaging array: 32x32 = 1024 microphones!

- Number of zones: 5 (3 open zones including center pinhole)

- Pixel pitch (distance between each mic): 2*wavelength (~67mm / 2.6")

- Focal length of zone plate: 2m (~79")

- Actual distance required to focus on subject 10m away: 2.5m (98")

- Imaging array physical size: 2.1m (~84")

- Field of view: 56 degrees
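The figures in this list can be cross-checked with a few lines of arithmetic. The sketch below is Python and makes several assumptions: the thin-lens equation is used for the focusing distance, the standard Fresnel zone formula r_n = sqrt(n·λ·f + (n·λ/2)²) for the zone plate radii, and the field of view is taken as the angle the array subtends from the plate.

```python
import math

WAVELENGTH_M = 0.033       # 10kHz, rounded as in the list above
FOCAL_LENGTH_M = 2.0
SUBJECT_DISTANCE_M = 10.0
MICS_PER_SIDE = 32
NUM_ZONES = 5

def image_distance(focal_length, subject_distance):
    """Thin-lens equation: 1/f = 1/subject + 1/image, solved for image."""
    return 1.0 / (1.0 / focal_length - 1.0 / subject_distance)

def field_of_view_deg(sensor_size, distance):
    """Full angle subtended by a sensor of the given size at the given distance."""
    return math.degrees(2.0 * math.atan(sensor_size / 2.0 / distance))

def zone_radius(n, wavelength, focal_length):
    """Outer radius of the n-th Fresnel zone for a source at infinity."""
    return math.sqrt(n * wavelength * focal_length + (n * wavelength / 2.0) ** 2)

pitch_m = 2 * WAVELENGTH_M
array_size_m = MICS_PER_SIDE * pitch_m
focus_m = image_distance(FOCAL_LENGTH_M, SUBJECT_DISTANCE_M)
plate_diameter_m = 2 * zone_radius(NUM_ZONES, WAVELENGTH_M, FOCAL_LENGTH_M)

print("array size:", round(array_size_m, 2), "m")
print("focus distance:", round(focus_m, 2), "m")
print("FOV at focal length:", round(field_of_view_deg(array_size_m, FOCAL_LENGTH_M)), "deg")
print("FOV at focus distance:", round(field_of_view_deg(array_size_m, focus_m)), "deg")
print("zone plate diameter:", round(plate_diameter_m, 2), "m")
```

Under these assumptions the 56 degree figure matches the array sitting at the 2m focal length. With the array moved back to 2.5m to focus on a subject 10m away, the same formula gives roughly 46 degrees. The five-zone plate itself would be a little under 1.2m across.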

If we could sample 16 channels at a time and sampled each group for 100 periods (10ms) with 5ms of dead time when switching microphones, it would take about one second to build up one frame (1024 / 16 = 64 groups at 15ms each).

Current thinking:

A phased array approach is in some ways less cool, but is more practical in several ways (smaller, reduced circuitry requirements, higher frequency bandwidth, etc). A modular design could require relatively minimal circuitry development and fab:

- Custom sound capture PCB designed

  - 8-channel ADC such as the ADS1178

  - Preamp circuitry for 8 electret microphones

    - Electret mics are extremely cheap and can be wired offboard to an array frame without much worry about interference

  - Single SPI interface

- Single array frame holds all mics from different boards together in a known geometry

- Existing logic board (FPGA or possibly DSP) connects multiple sound capture boards together, combining synchronized SPI streams from each board

  - FPGA is ideal because processing complexity is minimal but I/O is high, need a separate SPI bus for each board

  - Logic stores sound onto SD card for later retrieval and processing

- PC loads data file containing all mic channels, performs simple delay-and-sum beamforming to construct an image or animation of the sound

  - FFT could be used to extract frequencies present, encode them as pixel colors
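One possible way to turn frequency content into a pixel color is sketched below in Python. Everything specific here is an assumption made for illustration: the three band edges, the low/mid/high to red/green/blue mapping, the 8kHz sample rate, and the use of a naive DFT in place of a real FFT (which would give the same values faster).

```python
import cmath
import math

def dft_power(samples):
    """Naive O(N^2) DFT power spectrum for bins 0..N/2."""
    n = len(samples)
    power = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(samples))
        power.append(abs(acc) ** 2)
    return power

def band_color(samples, sample_rate, bands):
    """Map the energy in three (low_hz, high_hz) bands to an (R, G, B) tuple,
    scaled so the strongest band is 255."""
    power = dft_power(samples)
    n = len(samples)
    energies = [sum(p for k, p in enumerate(power)
                    if lo <= k * sample_rate / n < hi)
                for lo, hi in bands]
    peak = max(energies) or 1.0  # avoid dividing by zero on silence
    return tuple(round(255 * e / peak) for e in energies)

SAMPLE_RATE = 8000                                # Hz, hypothetical
BANDS = [(0, 500), (500, 2000), (2000, 4001)]     # hypothetical band edges

# Beamformed samples for one pixel containing a pure 1kHz tone.
tone = [math.sin(2 * math.pi * 1000 * i / SAMPLE_RATE) for i in range(64)]
print(band_color(tone, SAMPLE_RATE, BANDS))
```

A 1kHz tone falls entirely in the middle band here, so the pixel comes out pure green. In a real pipeline the input would be the delay-and-sum output for each pixel, and the scaling would likely need to be shared across the whole frame so that brightness still reflects intensity.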